DNS Security, Part III: DANE and the WebPKI

Posted by ekr on 28 Dec 2021

This is Part III of my series on DNS Security (see Part I for an overview of DNS and its security issues and Part II for background on DNSSEC). In this part, we cover DNS-Based Authentication of Named Entities (DANE), which uses the DNS to authenticate TLS keys.

As I mentioned previously, a lot of the reason that DNSSEC hasn't seen much deployment is that the information it's protecting—principally IP addresses—isn't usually of very high value; if you're really serious about protecting your communications you encrypt them—probably using TLS, which authenticates the server via a certificate rather than by IP address. At the same time, pretty much everyone agrees that the system responsible for issuing TLS certificates (the WebPKI) is a mess. But as my colleague Allan Schiffman used to say, sometimes when you have two problems they solve each other. This brings us to the topic of DANE, which is an attempt to solve these problems together by using the DNS to authenticate TLS certificates, thus replacing the WebPKI's not-great security properties with the nominally better DNSSEC ones and simultaneously providing a stronger use case for DNSSEC deployment.

DANE/TLSA

DANE actually attempts to address two distinct (and arguably not that closely related) complaints about the WebPKI:

- That the large number of CAs in the WebPKI makes it insecure.
- That having to go to a CA to get a certificate is bad.

To accomplish this, DANE can be used by the domain in two modes:

- Restrictive: this allows the server to restrict the set of valid keys, potentially excluding keys which would otherwise appear in valid certificates. This is intended to address the "I don't trust all the CAs" complaint.
- Additive: this allows the server to cause the client to accept one or more keys that it would otherwise not accept (because they aren't certified by an acceptable CA). This is intended to address the "I don't want to talk to a CA" complaint, though it also has the side effect of excluding other keys.

Somewhat confusingly, though understandably from a protocol perspective, these are both stored in the same DNS record type, TLSA (which "does not stand for anything; it is just the name of the RRtype"), with a "usage" field to differentiate them. However, the semantics are very different. To add to the confusion, DANE has two additive modes and two restrictive modes, with one of each referring to end-entity certificates and one referring to trust anchors which can sign other certificates. The result is that discussions about DANE tend to be fairly hard to follow unless you can remember which usage model is being discussed.
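For concreteness, here is a minimal Python sketch of the fields a TLSA record carries. The usage, selector, and matching-type values follow RFC 6698, and the mnemonics (PKIX-TA, PKIX-EE, DANE-TA, DANE-EE) follow RFC 7218; the class and function names (`TLSARecord`, `parse_tlsa`, `tlsa_owner_name`) are hypothetical helpers for illustration, not any library's API.

```python
from dataclasses import dataclass
from enum import IntEnum


class Usage(IntEnum):
    """TLSA certificate usage field (RFC 6698; mnemonics from RFC 7218)."""
    PKIX_TA = 0  # restrictive: a CA that must appear in a WebPKI-valid chain
    PKIX_EE = 1  # restrictive: the end-entity cert, which must also be WebPKI-valid
    DANE_TA = 2  # additive: a domain-supplied trust anchor
    DANE_EE = 3  # additive: a domain-supplied end-entity cert (e.g., self-signed)


@dataclass(frozen=True)
class TLSARecord:
    usage: Usage
    selector: int       # 0 = full certificate, 1 = SubjectPublicKeyInfo only
    matching_type: int  # 0 = exact bytes, 1 = SHA-256, 2 = SHA-512
    data: bytes         # certificate association data

    @property
    def restrictive(self) -> bool:
        # Usages 0 and 1 narrow the WebPKI; they never replace it.
        return self.usage in (Usage.PKIX_TA, Usage.PKIX_EE)


def tlsa_owner_name(host: str, port: int = 443, proto: str = "tcp") -> str:
    """TLSA records are published at _<port>._<proto>.<host>."""
    return f"_{port}._{proto}.{host.rstrip('.')}."


def parse_tlsa(rdata: str) -> TLSARecord:
    """Parse presentation-format RDATA such as '3 1 1 ab12...'."""
    usage, selector, mtype, *hex_parts = rdata.split()
    return TLSARecord(Usage(int(usage)), int(selector), int(mtype),
                      bytes.fromhex("".join(hex_parts)))
```

For example, `parse_tlsa("3 1 1 " + "ab" * 32)` yields a DANE-EE record whose `restrictive` property is `False`, and `tlsa_owner_name("www.example.com")` returns `"_443._tcp.www.example.com."`. The point is that the same record type carries both families of semantics; only the first number tells them apart.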

Restrictive Modes

The basic idea with a restrictive mode is to contain misissuance. Because, to a first approximation, any CA accepted by the client can issue a certificate for any domain, the security of your site is only as strong as the weakest CA that clients trust, and a mistake by some CA you have never heard of can allow an attacker to impersonate your site.

DANE addresses this by allowing the site to publish a list of either:

- The CAs that are allowed to issue certificates for the domain name in question, presumably the list of CAs that the site operator expects to use (Usage 0).[1]
- The certificates that are valid for the domain (Usage 1).

When the client connects to the TLS server, it compares the certificate it gets from the server against the TLSA records and only accepts that certificate if there is a match, either for the CA (Usage 0) or for the end-entity certificate (Usage 1). Importantly, this is a double check: the certificate still needs to be valid according to the ordinary WebPKI standards. These modes are just designed to protect against misissuance, so you still need to get a valid certificate.
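The restrictive check can be sketched roughly as follows. This is a simplification under stated assumptions: every record uses selector 0 (full certificate) and matching type 1 (SHA-256), certificates are raw DER bytes, WebPKI path validation has already been done elsewhere, and the function is only called when the domain has published usage 0 or 1 records. `restrictive_accept` is a hypothetical name.

```python
import hashlib
from typing import Sequence

# Simplified record: (usage, SHA-256 of the full DER certificate),
# i.e. selector 0 / matching type 1 only.
Record = tuple[int, bytes]


def sha256(der: bytes) -> bytes:
    return hashlib.sha256(der).digest()


def restrictive_accept(chain: Sequence[bytes], webpki_valid: bool,
                       records: Sequence[Record]) -> bool:
    """Usages 0 and 1: a TLSA match is required *in addition to* WebPKI validity.

    chain[0] is the server's end-entity certificate; chain[1:] are the
    CA certificates on the validated path. Assumes usage 0/1 records exist.
    """
    if not webpki_valid:
        return False  # these modes never rescue an invalid chain
    for usage, digest in records:
        if usage == 0 and any(sha256(ca) == digest for ca in chain[1:]):
            return True  # an approved CA is on the path
        if usage == 1 and sha256(chain[0]) == digest:
            return True  # the exact approved end-entity certificate
    return False  # valid per the WebPKI, but not one the domain approved
```

A certificate misissued by some other CA fails the final check even though `webpki_valid` is true, which is exactly the misissuance case these modes target.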

Conventional wisdom is that it's operationally better to use Usage 0. Advertising the end-entity certificate makes it harder to update that certificate, which you have to do at minimum around once a year[2] and more frequently if you are using a CA like Let's Encrypt, which issues certificates with shorter lifetimes.[3] It can also be a problem if you have a big server farm and issue new certificates for each server, because each certificate will be different. If you advertise the CA certificate (Usage 0), it's still possible that the CA will change its certificate (though this is much less frequent), but if it happens it will cause connections to your site to break.[4]
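The renewal problem is easy to see in terms of what actually gets hashed. The sketch below uses a toy certificate type (`ToyCert` is hypothetical, and its `der` property is not real DER) to compare a selector-0 record, which hashes the whole certificate, with a selector-1 record, which hashes only the public key, as in footnote 3.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class ToyCert:
    """Hypothetical stand-in for a parsed X.509 certificate."""
    spki: bytes      # public key info: changes only when the key changes
    tbs_rest: bytes  # serial number, validity dates, etc.: change on every renewal

    @property
    def der(self) -> bytes:
        return self.tbs_rest + self.spki  # not real DER; just "covers everything"


def tlsa_rdata(usage: int, selector: int, cert: ToyCert) -> str:
    """Presentation-format TLSA RDATA using matching type 1 (SHA-256)."""
    content = cert.der if selector == 0 else cert.spki
    return f"{usage} {selector} 1 {hashlib.sha256(content).hexdigest()}"


old = ToyCert(spki=b"key-A", tbs_rest=b"serial=1;notAfter=2022")
renewed = ToyCert(spki=b"key-A", tbs_rest=b"serial=2;notAfter=2023")
rekeyed = ToyCert(spki=b"key-B", tbs_rest=b"serial=3;notAfter=2023")

print(tlsa_rdata(1, 0, old) == tlsa_rdata(1, 0, renewed))  # False: full-cert record must change
print(tlsa_rdata(1, 1, old) == tlsa_rdata(1, 1, renewed))  # True: same key, same record
print(tlsa_rdata(1, 1, old) == tlsa_rdata(1, 1, rekeyed))  # False: new key, new record
```

Renewing with the same key changes the `1 0 1` record but not the `1 1 1` record; generating a new key changes both, which is why the DNS and the server still have to stay in sync.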

Additive Modes

Historically, getting the WebPKI certificate you need to host a TLS server that clients will seamlessly accept has been kind of a pain. The advice in these circumstances used to be that you should just self-sign your certificates and ask users to click through the resulting warnings, but over the past 5-10 years browsers have really started to crack down on that, with the result that you now get a big scary warning which people (hopefully) don't want to click through.

This is a good thing for security, as it's very hard for people to evaluate these warnings and know what's safe, but it made life much harder for people who didn't want to get a valid certificate. DANE tries to address this by allowing you to tell clients that they should accept your certificate even if it can't be validated via the WebPKI. As with the restrictive modes, there are two versions:

- Usage 2 specifies a certificate authority that will be used as the trust anchor for the TLS server. This overrides the existing trust anchor list for this domain, which means that you can create your own CA and issue yourself certificates without getting it into a browser root store.
- Usage 3 specifies a specific certificate[5] that is expected to be used by the TLS server. This is conceptually like Usage 2, except that you don't need to spin up your own CA; you can just make a self-signed certificate and bless it using DANE/TLSA.

Note that these modes aren't purely additive, because they also restrict the list of certificates to those authorized by the TLSA records, so they also prevent someone from getting a WebPKI certificate that is valid for your domain.
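The additive modes, and their restrictive side effect, can be sketched the same way. Again this is a simplification under stated assumptions: records are reduced to (usage, SHA-256 of the full certificate), the usage 2 anchor is assumed to appear in the chain the server sends, and `signed_by` is a hypothetical callback that checks the signatures from the server certificate up to a given anchor. In this sketch `webpki_valid` only matters when there are no usage 2 or 3 records.

```python
import hashlib
from typing import Callable, Sequence

Record = tuple[int, bytes]  # (usage, SHA-256 of the full DER certificate)


def sha256(der: bytes) -> bytes:
    return hashlib.sha256(der).digest()


def additive_accept(chain: Sequence[bytes], webpki_valid: bool,
                    records: Sequence[Record],
                    signed_by: Callable[[Sequence[bytes], bytes], bool]) -> bool:
    """Usages 2 and 3: the domain's own records replace the browser root store."""
    usable = [r for r in records if r[0] in (2, 3)]
    if not usable:
        return webpki_valid  # no DANE-TA/DANE-EE records: ordinary WebPKI rules
    for usage, digest in usable:
        if usage == 3 and sha256(chain[0]) == digest:
            return True  # e.g., a self-signed cert blessed via the DNS
        if usage == 2 and any(sha256(c) == digest and signed_by(chain, c)
                              for c in chain[1:]):
            return True  # the chain ends at the domain's own CA
    return False  # a WebPKI-valid certificate does not help here
```

The last line is the "not purely additive" part: once usage 2 or 3 records exist, a certificate from a public CA is rejected unless the records name it.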

DANE and DNSSEC

DANE requires DNSSEC (see RFC 6698; Section 4.1). It should be obvious why the additive modes require it: otherwise an attacker who controlled the DNS could take over your TLS connections, thus undercutting the design goal of being secure against active attackers. The situation with the restrictive modes is somewhat less obvious; an attacker who controls the DNS can already cause failures by providing a bogus IP address. This was a topic of some debate in the DANE WG, but at the end of the day it's easiest to just require DNSSEC.

So what happens if you try to retrieve a TLSA record for example.com but that fails (for instance, if you can't validate the DNSSEC signatures, or the TLSA record never arrives even though the NSEC record says it ought to)? The only safe thing to do is to refuse to create the TLS connection. The reason for this is that the valid TLSA record(s), assuming there is one and there hasn't been a misconfiguration, might specify a different certificate from the one presented by the server; if you can't retrieve the record, you need to assume the worst and fail the connection.
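The resulting fail-closed policy can be summarized as a small decision function. The outcome categories loosely follow the DNSSEC validation states (secure, insecure, bogus, indeterminate) that RFC 6698 Section 4.1 relies on; the enum and function names are hypothetical.

```python
from enum import Enum, auto


class TLSALookup(Enum):
    SECURE_RECORDS = auto()  # TLSA RRset returned and DNSSEC-validated
    SECURE_DENIAL = auto()   # validated NSEC/NSEC3 proof that no TLSA exists
    INSECURE = auto()        # zone is provably unsigned, so DANE cannot apply
    BOGUS = auto()           # signatures fail to validate
    INDETERMINATE = auto()   # e.g., the answer never arrives


def tls_policy(outcome: TLSALookup) -> str:
    """Decide how to treat the TLS connection given the TLSA lookup result."""
    if outcome is TLSALookup.SECURE_RECORDS:
        return "enforce-tlsa"
    if outcome in (TLSALookup.SECURE_DENIAL, TLSALookup.INSECURE):
        return "webpki-only"  # no DANE information: ordinary certificate checks
    # BOGUS or INDETERMINATE: a real TLSA record might name a different
    # certificate, so the only safe choice is to fail closed.
    return "abort"
```

The last branch is what worries browser vendors: any network that mangles DNSSEC responses turns into a hard connection failure.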

This creates a problem for endpoints which might have unreliable DNS service, such as browsers. As I mentioned in Part II, browser and OS vendors have been reluctant to turn on DNSSEC validation by default because of concerns about spurious DNSSEC validation failures leading to hard connection failures. The same concerns apply here, and no major browser has added support for DANE/TLSA. (See Adam Langley's 2015 post "Why not DANE in browsers" for his explanation of why Chrome doesn't do DANE.) The situation is somewhat better for endpoints such as mail servers, which tend to have a clearer path to the Internet, and DANE is seeing some usage there, as discussed below.

DANE TLS Extension

One way to address the problem of DNSSEC network interference is to bypass the DNS service entirely. It's true you need DNS in order to resolve the IP address of the server, but once you've got that, why not have the server just give you the TLSA records, along with their supporting DNSSEC signatures, directly?[6] Because DNSSEC signs objects rather than protecting channels, those records are self-contained and can be verified no matter how they are delivered. The obvious thing to do here is to have the server provide its DNSSEC-authenticated TLSA records in the TLS handshake along with the server certificate.

The IETF spent some time developing just such an extension but was unable to reach consensus on the precise semantics,[7] and at the end of the day the whole thing kind of just died out due to lack of energy, in part because browsers are where this makes the most difference and no browser was really interested in the extension. Eventually, the extension got published as an RFC in what's called the Independent Stream, which roughly means that it was published and has a TLS code point assignment but isn't any kind of standard. To the best of my knowledge, few TLS stacks and no browsers support this extension.

DANE Deployment Status

When looking at DANE deployment, we should distinguish the situation on the Web from that for e-mail. As noted above, DANE has essentially no deployment on the Web: no browser supports it in either the main DNSSEC or the TLS extension mode, and I'm not aware of any real interest from browsers. I explore the reasons for this below.

It seems like there is somewhat more interest in DANE on the e-mail side. Data collected by Viktor Dukhovni and Wes Hardaker at DNSSEC-Tools indicates about 17 million DS records and about 3 million DANE-protected domains, suggesting that DANE deployment is about 1/6 as high as DNSSEC deployment, which is already pretty low. Viktor Dukhovni has also posted some more details of DANE deployment. As you'd expect, most of the deployment is driven by big hosting providers (most likely because they can ensure that their DNSSEC records and TLS configurations are in sync).

Viktor reports:

The number of DANE domains that at some point were listed in Gmail's email transparency report is 557 (this is my ad-hoc criterion for a domain being a large-enough actively used email domain). Of these, 331 are in recent (last 90 days of) reports (see [2] below my signature).

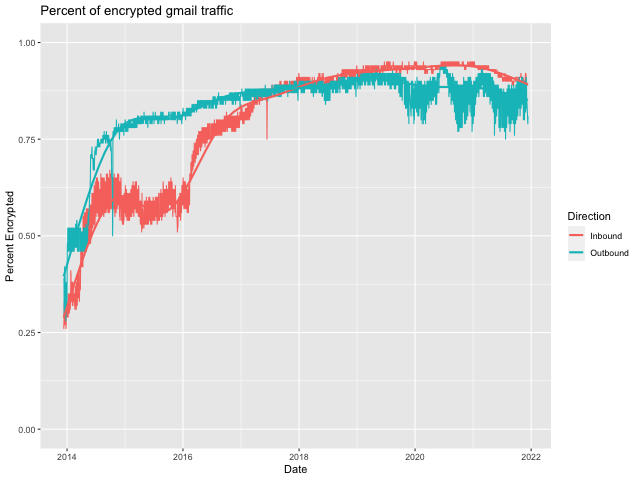

Google's most recent e-mail transparency report has over 92,000 domains. Google doesn't publish the fraction of email to and from each domain, so it's a bit hard to be sure, but 331 out of more than 92,000 seems like a fairly small fraction. In terms of whether the record is consumed, the situation is mixed. Microsoft has announced that it intends to support TLSA and, according to Viktor Dukhovni, will start processing it for outbound mail in 2022. Gmail does not, and instead uses something called MTA-STS (see below), which just indicates that the recipient wants you to use TLS. This is not to say that there isn't a lot of TLS-encrypted email: Gmail currently reports that 81% of its outgoing email and 89% of its incoming email is encrypted.

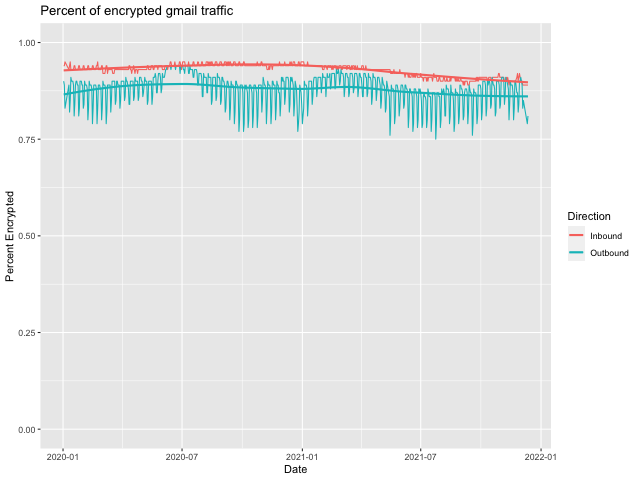

As an aside, do you notice the strong seasonality effects in the "Outbound" direction but not in the "Inbound" direction? You can see this even more strongly if we zoom in to cover just 2020 and 2021.

Obviously, we'd need to test this hypothesis, but I believe what we are seeing here is a weekday effect based on business addresses being more likely to use TLS than non-business addresses, and Gmail doing more sending to business addresses during the week. Layered on top of that, we have the decreased use of mail for business towards the end of the year (hence the slump at the end) and then some COVID effects (perhaps increased use of personal addresses for business use?) in mid 2020 and mid 2021.

Why didn't DANE take off?

In Part II, I said that DNSSEC deployment was a collective action problem between clients and servers, and the same thing is true here, but between TLS clients and TLS servers. And as with DNSSEC, the basic problem is that supporting DANE doesn't add enough value (and, more importantly, incremental value) for implementations. Let's take the restrictive and additive cases separately.

Restrictive Modes

At first glance, one might think that the restrictive modes would be a pretty good case for DANE. There were a lot of concerns—especially at the time DANE was designed—around certificate misissuance and DANE seemed to offer a solution to that. In his 2015 post, Adam Langley argues that two other technologies are more appropriate here:

- Certificate Transparency, which helps detect misissuance (and prevent covert misissuance) by forcing certificates to be published.
- HTTP Public Key Pinning (HPKP), which allows Web servers to publish a list of the public keys that are valid for the site and exclude others. (Langley says he is "lukewarm" on HPKP.)

Certificate transparency has been quite successful at detecting CA misbehavior, but in the 6 years since Langley's post, HPKP has fallen out of favor, largely due to concerns about misconfiguration: if you accidentally pin to the wrong certificate (say your CA changes its certificate) you can make it impossible for people to reach your site, and because the pins are delivered over the TLS channel, your site is broken until the pins expire or the browser makers take pity on you and remotely invalidate your pin. In the past few years, browser makers have deprecated HPKP.
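To make the HPKP failure mode concrete: a pin is a base64-encoded SHA-256 hash of a SubjectPublicKeyInfo (RFC 7469's `pin-sha256`), and a browser that has cached pins rejects any chain containing none of the pinned keys until `max-age` runs out. The function names below are hypothetical, the key bytes are placeholders, and the check is simplified (no backup-pin or `includeSubDomains` handling).

```python
import base64
import hashlib


def pin_sha256(spki_der: bytes) -> str:
    """RFC 7469 pin: standard base64 of SHA-256 over the SubjectPublicKeyInfo."""
    return base64.b64encode(hashlib.sha256(spki_der).digest()).decode("ascii")


def hpkp_allows(chain_spkis: list[bytes], pins: set[str],
                pinned_at: float, max_age: int, now: float) -> bool:
    """Simplified browser check against previously cached pins."""
    if now >= pinned_at + max_age:
        return True  # the cached pins have expired
    # The connection succeeds only if some key in the chain is pinned.
    return any(pin_sha256(spki) in pins for spki in chain_spkis)


SIXTY_DAYS = 60 * 24 * 3600
pins = {pin_sha256(b"old-ca-key")}  # site pinned to its CA's key
old_chain = [b"leaf-key", b"old-ca-key"]
new_chain = [b"leaf-key", b"new-ca-key"]  # the CA rotated its key

print(hpkp_allows(old_chain, pins, 0, SIXTY_DAYS, now=100))  # True
print(hpkp_allows(new_chain, pins, 0, SIXTY_DAYS, now=100))  # False: locked out
print(hpkp_allows(new_chain, pins, 0, SIXTY_DAYS, now=SIXTY_DAYS))  # True: pins expired
```

Because the corrected pins could only be delivered over a TLS connection that now fails, the site has no way to repair the situation from its own side before `max-age` elapses.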

In principle, DANE's restrictive modes do a better job here because you don't need TLS to work in order to fix a misconfiguration, but this comes at the cost of needing to coordinate your Web server certificates and your DNS, which can be real overhead, especially in cases where your DNS is served by one entity and your Web site is served by another (or maybe several others) that doesn't coordinate with it. For instance, a common configuration when your site is hosted on a CDN is to have the DNS provider point to the CDN (either by a CNAME or just by IP address); the DNS provider doesn't need to know what certificate the CDN has (which the CDN may obtain on its own), but with DANE you would need a channel for it to learn the current certificate configuration.

In addition to management overhead, my sense is that people have gotten somewhat less concerned about misissuance, in part due to Certificate Transparency and in part due to some well-publicized examples of misbehaving CAs being removed from the ecosystem, and the resulting sense that the WebPKI is being better operated. This makes the restrictive modes less compelling.

TLSA does do one more useful thing, which is to indicate that the client should expect to get TLS with a valid certificate and fail if it doesn't. However, at the time that DANE was designed, the Web already had HSTS which did this in HTTP (and thus was easier to deploy). E-mail recently got something similar in the form of MTA-STS, and this seems to be what many servers such as Gmail are deploying. MTA-STS even has a DNS mode, but because it doesn't contain information about the key, it requires far less coordination between the TLS server and the DNS. It seems like an open question whether we'll end up with MTA-STS, TLSA, or a mix of both.
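For comparison with TLSA, here is a rough sketch of what an MTA-STS sender does with a recipient's policy. The policy fields (`version`, `mode`, `mx`, `max_age`) and the single-label `*.` wildcard come from RFC 8461; the function names are hypothetical, and fetching the policy over HTTPS and checking the `_mta-sts` TXT record are omitted. Note that nothing in the policy names a key or certificate, which is why it needs so little coordination with the TLS server.

```python
def parse_mta_sts(text: str) -> dict:
    """Parse an RFC 8461 policy body ("key: value" lines; "mx" may repeat)."""
    policy: dict = {"mx": []}
    for line in text.splitlines():
        if not line.strip():
            continue
        key, _, value = line.partition(":")
        key, value = key.strip(), value.strip()
        if key == "mx":
            policy["mx"].append(value.lower())
        else:
            policy[key] = value
    return policy


def mx_matches(host: str, pattern: str) -> bool:
    """'*.example.net' matches exactly one extra leftmost label."""
    host = host.lower().rstrip(".")
    if pattern.startswith("*."):
        label, _, rest = host.partition(".")
        return bool(label) and rest == pattern[2:]
    return host == pattern


def delivery_decision(policy: dict, mx_host: str, tls_cert_valid: bool) -> str:
    """tls_cert_valid: TLS succeeded with a WebPKI-valid cert for mx_host."""
    ok = tls_cert_valid and any(mx_matches(mx_host, p) for p in policy["mx"])
    if ok or policy.get("mode") != "enforce":
        return "deliver"  # "testing" and "none" never block delivery
    return "refuse"
```

For example, a policy listing `mx: *.example.net` in `enforce` mode lets mail go to `mx1.example.net` over valid TLS but refuses `a.b.example.net`, since the wildcard covers only one label.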

Additive Modes

By contrast to the restrictive modes, which solved a real problem—though perhaps not in the way that some people wanted—the value proposition of the additive modes has always been quite unclear to me. The basic story seems to be that it's inconvenient and expensive to deal with the WebPKI CAs and DANE/TLSA offered a convenient and free (and incidentally more secure) alternative. Unfortunately, there are two problems with this story.

The first problem is that it's not actually that inconvenient to get a WebPKI certificate. It's true that it used to be somewhat inconvenient, but then in late 2015 (a little over three years after DANE was published) Let's Encrypt launched a free, automated certificate authority based on the ACME protocol. This meant that anyone could get a free WebPKI certificate that would be acceptable to (almost) every client without any mucking around with the DNS. Moreover, as described above and in more detail by Chung et al., getting DNSSEC added to your domain and populating it with TLSA records wasn't that easy in practice, especially compared to Let's Encrypt, which didn't require any changes to DNS at all.

The second problem is that DANE didn't offer any incremental value. Even if we assume that DANE/TLSA was easier to manage than WebPKI certificates, so that an all-DANE world would be better than an all-WebPKI world, the all-DANE world was indefinitely far away. The problem is that a large fraction of clients wouldn't have supported DANE at the time of launch, so you would need a WebPKI certificate in any case until essentially that entire population upgraded. This can take a really long time because the tail of clients who don't update is very long, so as a practical matter you are looking at having to support both DANE/TLSA and WebPKI more or less indefinitely, and this is obviously more effort than supporting WebPKI, even if you think that DANE alone would be easier than WebPKI alone.

It's useful to look at Let's Encrypt as a contrast: it's true that deploying with Let's Encrypt was easier than with certificates from previous WebPKI CAs, but that wouldn't have mattered if no client supported Let's Encrypt's certificates. Instead, Let's Encrypt had a "cross-sign" from an existing certificate authority that clients already trusted, which meant that its certificates were immediately valid. This allowed it to provide incremental value and was critical to its success. In general, it's extraordinarily hard to deploy new systems which require every client to change before you get any value and much easier to deploy systems which give people immediate value from deploying.

What about DNSSEC for the WebPKI?

One of the most frequent complaints about the WebPKI is that its method of verifying that a given entity should be issued a certificate is very weak and in fact depends on the DNS. The CA/Browser Forum Baseline Requirements require that the CA validate that the applicant has "ownership or control" of the domain. In practice, what this usually means is that the applicant is able to do one of the following:

- Make specific changes to the Web site, such as putting a file in /.well-known
- Receive an email sent to a site administrator address
- Make a specific change to the DNS
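ACME (RFC 8555) automates the first and third of these methods with its `http-01` and `dns-01` challenges. The sketch below computes what the CA expects to find, assuming an EC account key; the helper names are hypothetical and the JWK values in any example are placeholders. Note that the CA locates both via ordinary DNS lookups, and `http-01` additionally depends on the network path to the server.

```python
import base64
import hashlib
import json


def b64url(data: bytes) -> str:
    """Unpadded base64url, as used throughout ACME/JOSE."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def jwk_thumbprint(jwk: dict) -> str:
    """RFC 7638 thumbprint; this sketch only handles EC keys."""
    required = {k: jwk[k] for k in ("crv", "kty", "x", "y")}
    canonical = json.dumps(required, sort_keys=True, separators=(",", ":"))
    return b64url(hashlib.sha256(canonical.encode("utf-8")).digest())


def key_authorization(token: str, account_jwk: dict) -> str:
    return f"{token}.{jwk_thumbprint(account_jwk)}"


def http01_expectation(domain: str, token: str, account_jwk: dict) -> tuple[str, str]:
    """URL the CA fetches and the body it expects to find there."""
    url = f"http://{domain}/.well-known/acme-challenge/{token}"
    return url, key_authorization(token, account_jwk)


def dns01_expectation(domain: str, token: str, account_jwk: dict) -> tuple[str, str]:
    """TXT owner name the CA queries and the value it expects."""
    ka = key_authorization(token, account_jwk)
    return f"_acme-challenge.{domain}", b64url(hashlib.sha256(ka.encode("ascii")).digest())
```

Anyone who can answer the CA's DNS query for `example.com`, or intercept its HTTP fetch, can satisfy these checks without controlling the real site.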

Of course, all of these involve the CA using the DNS to look up information about the site, which means that they are vulnerable to attacks on the DNS. And because the site doesn't yet have a certificate, you can't use HTTPS to protect against those attacks as you normally would. In other words, the security of the WebPKI depends on the security of the CA's DNS resolution, the CA's network, and the routing infrastructure. If any of these is compromised, the CA can be tricked into misissuing.

More recently, we have seen a number of mechanisms, such as multiple-perspective validation, deployed to make DNS- and BGP-based attacks more difficult, and such attacks can potentially be detected via Certificate Transparency. Still, the situation isn't ideal.
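The idea behind multiple-perspective validation can be sketched as a quorum check: the CA fetches the challenge from several network vantage points and only proceeds if nearly all of them see the expected value, so a hijack that only affects routes near one vantage point is caught. The vantage-point names and the `max_disagreeing` threshold here are hypothetical; real CAs set their own policies.

```python
from typing import Optional


def corroborated(observations: dict[str, Optional[str]], expected: str,
                 max_disagreeing: int = 1) -> bool:
    """observations maps each vantage point to the challenge value it fetched
    (None if the fetch failed). The threshold is a hypothetical policy knob."""
    if not observations:
        return False
    disagreeing = sum(1 for seen in observations.values() if seen != expected)
    return disagreeing <= max_disagreeing


# An attacker hijacks the route near the CA's primary vantage point and serves
# the token; remote vantage points still reach the real server, which lacks it.
hijack = {"primary": "token-123", "remote-eu": None, "remote-ap": None}
honest = {"primary": "token-123", "remote-eu": "token-123", "remote-ap": "token-123"}
print(corroborated(hijack, "token-123"))  # False
print(corroborated(honest, "token-123"))  # True
```

This raises the bar (the attacker must fool most vantage points at once), but it does not help against an attacker who controls the link right next to the server, which is the gap discussed next.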

It seems like DNSSEC could potentially help, but the situation is somewhat complicated. In particular, it's not enough to just have the CA do DNSSEC verification; even in cases where the domain is signed and so the IP addresses can be trusted, if the attacker controls the routing system (or, even worse, the link to the server), then they can intercept the CA's connection to the server and fake the response, so it doesn't actually help that much to just secure the DNS.

What's needed to make this work is a way to advertise in the DNS that the CA should only use the DNS-based mechanisms for validating control of the domain name; because these will be protected by DNSSEC, the CA will no longer be subject to routing-based attacks. Of course, this also requires quite tight control of the DNS by the server operator, which makes it less attractive for them (one reason why the HTTP-based challenges are popular).

In any case, this would be a simple extension to the DNS (potentially an addition to CAA), but I don't know how much interest there would actually be.

It turns out such an extension to ACME already exists, but it does not seem to be widely deployed.

[Update 2021-12-28: Corrected to document the existence of the extension. Thanks to Daniel Micay for pointing this out.]
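As a rough illustration of this kind of restriction, here is how a CA might honor a CAA record that limits which validation methods it may use. This is a sketch assuming the `validationmethods` CAA parameter from RFC 8657, which is one published mechanism of this kind; it ignores CAA tree-climbing and the `issuewild` property, and the function names are hypothetical.

```python
def parse_caa_issue(value: str) -> tuple[str, dict[str, str]]:
    """Split a CAA 'issue' value like 'ca.example; validationmethods=dns-01'."""
    issuer, *params = [part.strip() for part in value.split(";")]
    parsed = {}
    for param in params:
        if param:
            key, _, val = param.partition("=")
            parsed[key.strip()] = val.strip()
    return issuer, parsed


def method_allowed(issue_values: list[str], ca_domain: str, method: str) -> bool:
    """May this CA validate with `method`? (Ignores tree-climbing and issuewild.)"""
    if not issue_values:
        return True  # no CAA 'issue' records: no restriction
    for issuer, params in map(parse_caa_issue, issue_values):
        if issuer != ca_domain:
            continue
        methods = params.get("validationmethods")
        if methods is None or method in [m.strip() for m in methods.split(",")]:
            return True
    return False
```

With a record such as `example.com. CAA 0 issue "letsencrypt.org; validationmethods=dns-01"`, `http-01` is refused, so a DNSSEC-signed zone would leave the CA with only DNS-based checks, which is the property described above.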

Next Up: DNS Transport Security

Even if DNSSEC were universally deployed and supported, including validation by endpoints, it would only be a partial answer to DNS security because it doesn't keep people's queries secret. Your DNS query history leaks much of your Internet history, so we know this is sensitive information, and there is already evidence of it being misused by ISPs and probably others. The next post will cover transport security mechanisms for DNS such as DNS over TLS (DoT), DNS over HTTPS (DoH), and DNS over QUIC (DoQ) that are intended to protect those queries, as well as Oblivious DoH and Oblivious HTTP, which protect the client's IP address.

[1] There is another record called Certification Authority Authorization (CAA), which carries similar information but is intended for certificate authorities, telling them whether they may issue for a given domain name. This is intended to help prevent misissuance, but it is not consumed by the client and therefore doesn't do anything once misissuance has happened.

[2] The CA/Browser Forum Baseline Requirements limit certificate lifetimes to 398 days.

[3] TLSA does allow you to advertise the public key of the server, and it's technically possible to get a new certificate but keep the same key. However, if you do that, you're relying on your server software never to generate a new key, which has its own problems.

[4] Another, more subtle failure mode is that the certificate chain that is constructed for an end-entity certificate can depend on the browser. If you're not careful about which CA certificates you advertise via DANE, you can create hard-to-diagnose failure modes.

[5] You could in principle use a raw public key, but TLS really expects to use certificates, so this is what DANE specifies and you're just stuck with some X.509 machinery.

[6] Note that this involves ignoring DNSSEC for the IP address, but as I pointed out previously, the security impact of this is minimal.

[7] Full disclosure: I was one of the major participants on one side of the debate, which is really too tedious to explain.