Why your asthma inhaler is so expensive (in the US)

It starts with the ozone hole...

Posted by ekr on 09 Jun 2026

A little under 10% of the US population suffers from asthma. The good news is we have highly effective treatments. The bad news is that despite these treatments being decades old, they're shockingly expensive in the US as the result of a really bad interaction between the ozone hole, the patent system, the way drugs get priced, and the profit-seeking behavior of drug companies.

Note: This post is really just about the US; the situation is different in other countries, which often have robust price controls.

Asthma and Asthma Treatment

Asthma is an inflammatory disease in which the patient's airways constrict, often in response to some trigger such as exercise, allergy, or illness, leading to difficulty breathing. There are two front-line treatments for asthma:

- β2-agonists such as albuterol/salbutamol that induce the muscles of the airway to relax.

- Corticosteroids such as fluticasone that reduce inflammation in the airways.

These treatments work together, in that some β2-agonists are quick acting and therefore can treat an asthma attack immediately, whereas the corticosteroids reduce your susceptibility to asthma attacks but are not useful to deal with one already in progress. It's quite common for a patient to be on both classes of drugs, taking the corticosteroid daily and then a β2-agonist as needed, for instance before exercise or when they feel that they are having trouble breathing.

These drugs can be delivered systemically but are most commonly inhaled, so that they are delivered directly to the affected tissues. There are at least three major inhalation routes, but historically the most common is what's called a metered-dose inhaler (MDI, or "puffer"), which is basically a specialized spray can that lets you spray the drug right into your lungs.

These drugs are all quite old. The most common β2-agonist, albuterol (in the US)/salbutamol (elsewhere), was patented in 1966; the first inhaled corticosteroid (beclomethasone) was patented in 1976; and the most common inhaled corticosteroid (fluticasone propionate) was patented in 1980. The basic drugs are therefore long out of patent. Unfortunately, this is not the end of the story.

Drug Naming

When a drug is developed, it typically gets assigned (at least) two names:

- An international nonproprietary name (INN), which just describes the compound (e.g., fluticasone). INNs are assigned by the World Health Organization according to a fairly complicated system in which each class of drug has a "stem" prefix or suffix that helps tell you what it is. For instance, glucagon-like peptide (GLP) analogues all end in "glutide".

- A brand name, which is assigned by the manufacturer (e.g., Flovent) and is designed to sound appealing.

When the drug is initially marketed, it will of course use the brand name, but then generics will typically use the INN name, though the drug may also still be sold under the brand name. For instance, you can still buy Advil even though generic ibuprofen is widely available.

Some (mostly older) drugs will also have names in some older national naming system. For example, the β2-agonist salbutamol is known as "albuterol" in the US. Another example is the drug brand-named Tylenol and called acetaminophen in the US, paracetamol outside the US, and

Name Brand and Generics

The first thing you have to understand is the lifecycle of a drug.

When a new drug is first invented, the manufacturer will generally file for a patent. As a result, once the drug is approved the manufacturer will have some period of exclusivity during which only they can sell the drug. Developing drugs is incredibly expensive, as is the process of testing the drug to determine that it is safe and effective. This system allows the initial inventor to charge monopoly prices during the lifetime of the patent, typically far in excess of the cost of manufacture, thus recouping much of the upfront cost of developing the drug.[1]

Eventually, the patent on the drug will expire, allowing other manufacturers to make the drug themselves, in what's called a generic drug (the original manufacturer's version is called the name brand drug). Importantly, the FDA allows those other manufacturers to get approval to market the drug without repeating all the studies that the original inventor did. Instead, they just have to go through what's called an abbreviated new drug application (ANDA), in which they demonstrate that the drug is "bioequivalent" to the original drug (roughly, that it delivers the same active ingredient at the same rate and dosage). This isn't to say that the formulation is exactly the same (for instance, a generic tablet might have different coatings or binders), but it's obviously a lot easier to show bioequivalence than to repeat the original safety and efficacy trials. Once the generic is approved, it competes with the name brand drug, driving the price down towards the marginal cost of production. For obvious reasons, insurers tend to favor generic drugs, and there are even state laws that allow or require pharmacists to substitute generics for name brand drugs unless the prescriber explicitly says not to, so that (hopefully) a prescriber who writes the brand name out of habit doesn't push the patient onto the more expensive drug when a generic exists.
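A back-of-the-envelope sketch of the recouping argument, in Python. Every number here (a $1B development cost, a $5 per-unit production cost, a $200 patent-era price, and a $6 post-generic price) is a made-up assumption for illustration, not data about any real drug; the point is only how the number of units needed to recover a fixed upfront cost explodes once competition pushes the price toward marginal cost.

```python
# Hypothetical, illustrative numbers only; none of these figures come from real drugs.
DEVELOPMENT_COST = 1_000_000_000  # assumed upfront R&D and trial cost, in dollars
MARGINAL_COST = 5.0               # assumed cost to manufacture one unit, in dollars


def units_to_recoup(price_per_unit: float) -> float:
    """Units that must be sold at this price to recover the upfront cost."""
    margin = price_per_unit - MARGINAL_COST
    if margin <= 0:
        # Selling at or below marginal cost never recovers the upfront cost.
        return float("inf")
    return DEVELOPMENT_COST / margin


monopoly = units_to_recoup(200.0)  # assumed patent-protected price
generic = units_to_recoup(6.0)     # assumed price after generic competition

print(f"{monopoly:,.0f} units at the monopoly price")
print(f"{generic:,.0f} units at a near-marginal-cost generic price")
```

With these assumptions the patent-era price recovers the upfront cost after roughly 5 million units, while the near-marginal-cost generic price would need a billion units, which is why the originator's returns are concentrated in the exclusivity period.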

A very short primer on the US pharma supply chain

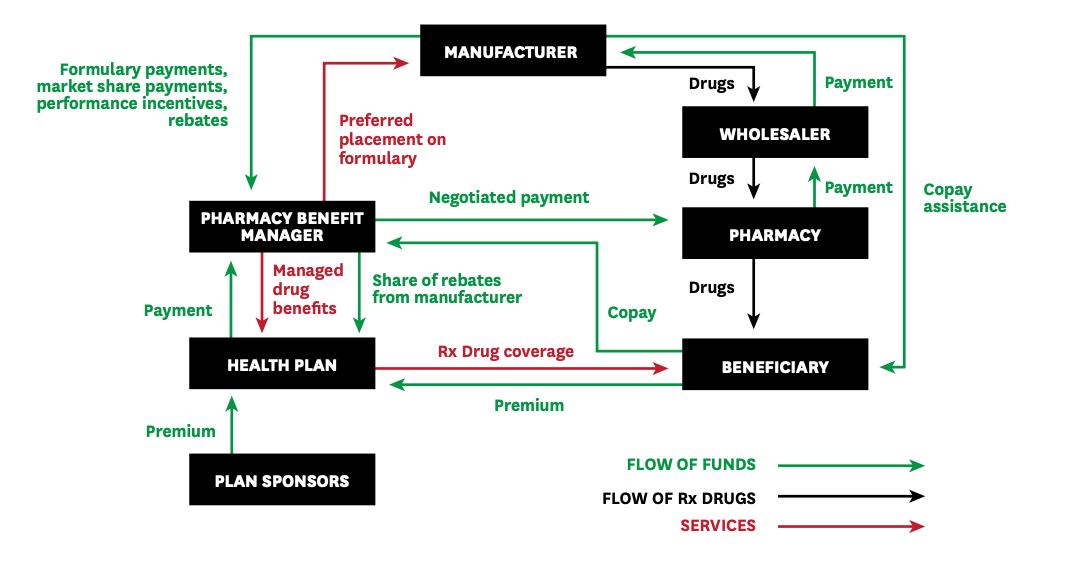

To understand what is happening here, it helps to have some understanding of the pharma supply chain. The figure below, from a report by Sood et al., provides a high level overview:

The pharma supply chain. Source: Sood et al.

Conceptually, drugs are distributed through a multi-tier supply chain from manufacturer to wholesaler to pharmacy (retail) and then ultimately to the user, much like other products (e.g., soda). However, unlike soda, there is a whole parallel financial structure because the end customers (the patients) do not usually pay for the drugs directly, at least not completely. Instead, they have an insurance plan that pays for much of the cost, often with the patient paying some kind of copay. The price the patient pays is set by the insurer (in part as a function of the arrangements discussed below) and is often independent of the pharmacy where the patient fills the prescription, so it's not like shopping for other products. In some cases, insurers have preferred pharmacies and offer patients discounts to use them, but as a general matter pharmacies don't compete directly on the price charged to customers, and two patients may pay very different prices for the same drug at the same pharmacy.

Instead of paying the price of the drug directly, patients will typically be asked to pay a fixed copay which is determined based on a tiered system. For instance, here are the co-payments for one of the Blue Cross/Blue Shield plans offered to federal employees:

| Tier | Copay |
|---|---|
| Generic | $5 |
| Preferred brand-name | $35 |
| Non-preferred brand-name | 50% of the Plan allowance for each purchase of up to a 90-day supply |
| Preferred specialty | $60 |
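A minimal Python sketch of how this tier table translates into what the patient pays for one fill. The flat copays come from the table; the $300 plan allowance in the usage lines is hypothetical, and the sketch ignores details such as the 90-day supply limit, deductibles, and any minimums or maximums the real plan may apply.

```python
from typing import Optional

# Flat per-fill copays from the federal-employee BCBS tier table above.
FLAT_COPAYS = {
    "generic": 5.00,
    "preferred brand-name": 35.00,
    "preferred specialty": 60.00,
}


def patient_cost(tier: str, plan_allowance: Optional[float] = None) -> float:
    """Out-of-pocket cost for one fill in the given tier (simplified)."""
    if tier == "non-preferred brand-name":
        # This tier is coinsurance rather than a flat copay: 50% of the plan allowance.
        if plan_allowance is None:
            raise ValueError("the non-preferred tier needs a plan allowance")
        return 0.5 * plan_allowance
    return FLAT_COPAYS[tier]


brand_fill = patient_cost("preferred brand-name")
# Hypothetical $300 plan allowance, for illustration only.
nonpreferred_fill = patient_cost("non-preferred brand-name", plan_allowance=300.0)
print(brand_fill, nonpreferred_fill)
```

The design point is that only the non-preferred tier scales with the drug's price; every other tier hides the price from the patient entirely.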

At the center of this parallel structure is what's called a pharmacy benefit manager (PBM). As suggested by the name, a PBM is a company which manages the prescription program for the insurance company (their customer), and negotiates with the manufacturer and the pharmacy around pricing and exclusivity. One key tool that the PBMs use is a formulary, which is the list of drugs that are preferred for a given health plan. Generally, the health plan will try to induce the patient to select drugs which are on the formulary, for instance by offering them a lower copay, requiring the patient to try some formulary drug before approving a non-formulary drug, or refusing non-formulary drugs altogether. It's obviously advantageous to the manufacturer to be on the formulary—especially if the competition is not—and so this is an opportunity for price negotiation.

Many of these actual price adjustments happen via rebates. Recall that the pharmacy buys its drugs from wholesalers, and at that point it's not clear which patient will be getting a given unit. However, the actual price that the patient should be paying, and that should be charged to the health plan, varies from patient to patient. Rebates paid by the manufacturer to the PBM and then distributed along the supply chain provide differentiated per-customer pricing while allowing the wholesaler and pharmacy to pay fixed prices.

Obviously, this whole structure gives the patient the incentive to choose drugs on the lower tier, but that doesn't mean that their incentives are aligned with those of the health plan and the PBM. For example, consider what happens if the brand name drug is $80 and the generic is $30 but the manufacturer offers the PBM and health plan a rebate to steer the patient towards the brand name drug; the patient can be exposed to a higher co-pay even though the health plan is actually paying less! This is covered in the FTC's report on PBMs:

In addition, our review of a number of contracts and internal documents summarizing such contracts reveals that some rebate contracts explicitly premise high rebates on the exclusion of AB-rated generics. These generic exclusions can be accomplished through “NDC blocks” of generic equivalents—that is, a contractual prohibition on payments for generic drugs, as identified by their National Drug Code or “NDC” number. These findings are consistent with public comments that identify the practice of PBMs preferring higher point-of-sale price branded products over generics, which may raise out-of-pocket costs for patients.

In brand drug manufacturer-PBM rebate contracts, the price of the branded drug to the payer may in some cases be lower than that of the excluded generic product net of rebates, but in other cases, the excluded generic may be a lower net price to the payer. Regardless of whether branded products are less expensive than a generic version net of rebates, agreements that exclude generics and biosimilars raise numerous concerns.

The bottom line here is that the health plans and PBMs are not necessarily incentivized to select drugs which lower the patient's ultimate cost.
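To see how the incentives can diverge, here is the $80 brand / $30 generic example as a small Python model. The copays come from the tier table above; the $30 rebate is a hypothetical figure, and the model assumes the plan keeps the whole rebate (in practice the PBM may retain part of it).

```python
# Illustrative model of the $80 brand / $30 generic example.
# Copays are from the tier table; the rebate is a hypothetical figure.


def plan_net_cost(list_price: float, copay: float, rebate: float = 0.0) -> float:
    """What the health plan ends up paying: list price minus copay minus any rebate."""
    return list_price - copay - rebate


BRAND_PRICE, GENERIC_PRICE = 80.0, 30.0
BRAND_COPAY, GENERIC_COPAY = 35.0, 5.0  # preferred brand tier vs generic tier
REBATE = 30.0  # hypothetical rebate paid in exchange for excluding the generic

# Each scenario maps to (what the plan pays, what the patient pays).
scenarios = {
    "generic covered": (plan_net_cost(GENERIC_PRICE, GENERIC_COPAY), GENERIC_COPAY),
    "generic excluded, brand + rebate": (
        plan_net_cost(BRAND_PRICE, BRAND_COPAY, REBATE),
        BRAND_COPAY,
    ),
}

for name, (plan, patient) in scenarios.items():
    print(f"{name:35s} plan pays ${plan:6.2f}  patient pays ${patient:6.2f}")
```

Under these assumptions the plan's net cost falls from $25 to $15 when it excludes the generic, while the patient's out-of-pocket cost rises from $5 to $35.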

Asthma Inhaler Pricing

The table below (thanks, Gemini!) shows the pricing at GoodRx for albuterol and a number of the most common inhaled corticosteroids. Prices vary a lot, but this is reasonably reflective of what you would pay without insurance (though I've seen albuterol as low as $18 at Amazon). These prices are for a single inhaler, which is something like a 1-4 month supply depending on how high a dose you are on and how often you use it. Note that albuterol and fluticasone are both generic, whereas the other drugs are still brand name.

| Medication | Class | Standard GoodRx Price | Link |
|---|---|---|---|
| Albuterol sulfate HFA (Generic, 18g) | β2-agonist | $41.27 | GoodRx |
| Fluticasone propionate HFA (Generic, 110mcg) | inhaled corticosteroid | $181.14 | GoodRx |
| Mometasone (Asmanex HFA, 100mcg) | inhaled corticosteroid | $114.92 | GoodRx |
| Ciclesonide (Alvesco HFA, 80mcg) | inhaled corticosteroid | $281.32 | GoodRx |
| Beclomethasone (Qvar RediHaler, 40mcg/80mcg) | inhaled corticosteroid | $311.20 | GoodRx |

The question you should be asking at this point is: why is this stuff so expensive? This is a particularly good question for fluticasone because of the following facts:

- It's generic.

- Fluticasone nasal spray is available over the counter and is dirt cheap (~$5 for a bottle with 120 sprays, each at about half the dose of the fluticasone inhaler).
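A rough price-per-dose comparison using the figures above, in Python. It assumes the generic inhaler holds 120 doses (the typical count mentioned later in this post) and treats two nasal sprays as one inhaler dose, per the "about half the dose" estimate; it compares only the price of the drug delivered, since the nasal and inhaled products are different formulations.

```python
# Figures from the post: ~$5 for 120 nasal sprays, each about half an inhaler dose,
# and $181.14 for a generic 110mcg fluticasone HFA inhaler.
NASAL_PRICE, NASAL_SPRAYS = 5.00, 120
INHALER_PRICE = 181.14
INHALER_DOSES = 120            # assumption: typical dose count for this inhaler
SPRAYS_PER_INHALER_DOSE = 2    # "about half the dose", so two sprays ~ one puff

nasal_per_equivalent_dose = NASAL_PRICE / NASAL_SPRAYS * SPRAYS_PER_INHALER_DOSE
inhaler_per_dose = INHALER_PRICE / INHALER_DOSES

print(f"nasal spray: ${nasal_per_equivalent_dose:.3f} per inhaler-equivalent dose")
print(f"inhaler:     ${inhaler_per_dose:.3f} per dose")
print(f"ratio:       {inhaler_per_dose / nasal_per_equivalent_dose:.1f}x")
```

On those assumptions the inhaler costs roughly 18 times as much per equivalent dose as the nasal spray.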

So, if fluticasone is out of patent, why is it so expensive? It's a long story, so buckle up.

CFC vs. HFA inhalers

As I said above, asthma inhalers are basically fancy spray cans, and like other spray cans they work by having an active ingredient (in this case the medication) suspended in a propellant under pressure. When you actuate the valve, the propellant sprays out, carrying the active ingredient with it out of the can and into your lungs. When these inhalers were first designed, they used propellants based on chlorofluorocarbons (CFCs),[2] a widely used class of chemicals that, as the name suggests, have chlorine and fluorine bonded to carbon. CFCs are largely non-toxic, non-flammable (fluorine bonds are very strong), and have a convenient boiling point, so they were used for a lot of purposes, including, famously, as refrigerants in air conditioners and refrigerators.

Unfortunately, it turns out CFCs are really bad for the ozone layer. You don't need to care about the chemistry, but the bottom line is that CFCs make their way up into the upper atmosphere, where they break down and release chlorine that destroys ozone. This is bad news because ozone absorbs ultraviolet light, so less ozone means more UV light reaches the ground, with negative effects on living organisms, including you, at least if you don't like having sunburn and skin cancer.

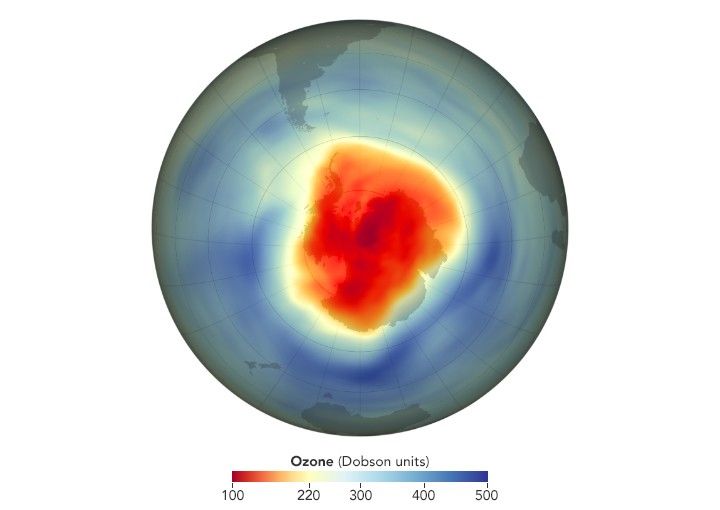

The situation was especially bad over the Antarctic, where there has been some really clear ozone depletion.

Ozone depletion over the antarctic. Source: NASA.

This was obviously bad, and in 1989 the Montreal Protocol restricted the use of ozone-depleting chemicals. For a while, metered-dose inhalers were exempt from these restrictions, but eventually they were restricted as well, with the US ending the use of CFCs in albuterol inhalers in 2008 and in other inhalers between 2004 and 2013.

These restrictions were made possible by the development of inhalers that used new propellants based on hydrofluoroalkanes (HFAs). Once these inhalers were developed, it was considered safe to phase out CFC-based inhalers.

Evergreening

HFA-based inhalers were good news for the ozone layer but bad for consumers because the manufacturers of those inhalers patented the new formulations, which meant that they were once again able to charge name brand prices. Because albuterol was already out of patent and generics were widely available, this meant that albuterol prices went up:

That's because the new MDIs use a new propellant, hydrofluoroalkane, or HFA. The familiar propellant, chlorofluorocarbon (CFC), could be out of production by the end of 2005 because of environmental concerns. The problem is that replacement inhalers powered by HFA cost more. In mid-July, Drugstore.com listed generic 17-gm albuterol MDIs for $14. A similar Proventil (Schering-Plough) MDI listed for $38 and Proventil HFA for $40. Prices for Ventolin (GlaxoSmithKline) MDIs were similar.

A 2015 paper by Jena et al. looked at the real-world cost paid by consumers, finding that:

Results: The mean out-of-pocket albuterol cost rose from $13.60 (95% CI, $13.40-$13.70) per prescription in 2004 to $25.00 (95% CI, $24.80-$25.20) immediately after the 2008 ban. By the end of 2010, costs had lowered to $21.00 (95% CI, $20.80-$21.20) per prescription. Overall albuterol inhaler use steadily declined from 2004 to 2010. Steep declines in use of generic CFC inhalers occurred after the fourth quarter of 2006 and were almost fully offset by increases in use of hydrofluoroalkane inhalers.
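The percentage changes implied by the Jena et al. figures, computed in Python from the three mean costs quoted above:

```python
# Mean out-of-pocket albuterol cost per prescription, from the Jena et al. results.
costs = {
    "2004": 13.60,
    "right after the 2008 ban": 25.00,
    "end of 2010": 21.00,
}

baseline = costs["2004"]
# Percentage change of each period relative to the 2004 baseline.
pct_change = {label: (cost / baseline - 1) * 100 for label, cost in costs.items()}

for label, cost in costs.items():
    print(f"{label:26s} ${cost:5.2f}  ({pct_change[label]:+.0f}% vs 2004)")
```

Right after the ban, the mean out-of-pocket cost was about 84% higher than in 2004, and by the end of 2010 it was still about 54% higher.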

By contrast, because fluticasone was invented later and so the patent expired later, there wasn't ever a generic version of fluticasone and GlaxoSmithKline (GSK) was still selling the brand-name version (Flovent). In due course, GSK rolled out Flovent HFA, which was approved in 2004. Naturally GSK obtained patents on Flovent HFA and the final patent expired in February of 2026. Hilariously, this patent isn't even for the drug itself. Instead, it's for the dose counter in the inhaler mechanism. Unfortunately, the FDA's position appears to be that if the original drug has a dose counter, the generic has to as well, so you couldn't just get rid of the dose counter and sell a generic.

Discounts, Rebates, and Authorized Generics

Of course, it hadn't escaped people's notice that asthma inhalers had gotten really expensive, and in 2024 the Senate Health, Education, Labor, and Pensions (HELP) committee started an investigation into the cost of asthma inhalers.

“There is no rational reason, other than greed, as to why GlaxoSmithKline charges $319 for Advair HFA in the United States, but just $26 for the same inhaler in the United Kingdom,” said Chairman Sanders. “It is unacceptable that Teva is charging Americans with asthma $286 for its QVAR RediHaler that costs just $9 in Germany. It is beyond absurd that Boehringer Ingelheim charges $489 for Combivent Respimat in the United States, but just $7 in France. As Chairman of the Senate HELP Committee, I am conducting an investigation into the efforts of these companies to pump up their profits by artificially inflating and manipulating the price of asthma inhalers that have been on the market for decades. The United States cannot continue to pay, by far, the highest prices in the world for prescription drugs.”
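Put as ratios, the prices in the quote look like this (a quick Python calculation using only the figures Senator Sanders cited):

```python
# US vs. foreign prices per inhaler, as cited in the quote above.
quoted_prices = {
    "Advair HFA (US vs UK)": (319, 26),
    "QVAR RediHaler (US vs Germany)": (286, 9),
    "Combivent Respimat (US vs France)": (489, 7),
}

# How many times the foreign price each US price is.
markup = {name: us / abroad for name, (us, abroad) in quoted_prices.items()}

for name, ratio in markup.items():
    print(f"{name:35s} US price is {ratio:.0f}x the foreign price")
```

That is roughly 12x, 32x, and 70x the foreign price, respectively.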

The manufacturers responded by announcing discount plans capping the cost of their drugs at $35/month (here's GSK's), though it's not clear to me what fraction of people were taking advantage of these coupon deals (I hadn't heard of them until recently).

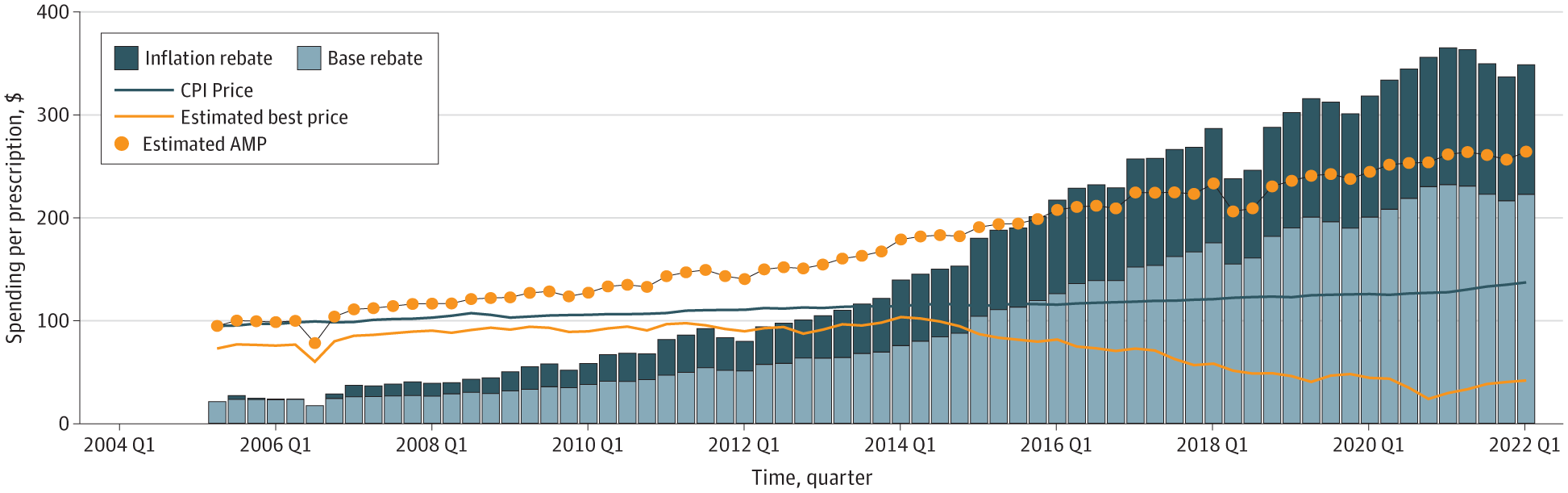

An additional factor here is the rebates that drug manufacturers are required to pay Medicaid. These rebates are based on both (1) the difference between what medicaid is paying and the average manufacturer price (AMP) (the wholesale price) and (2) the rate at which the AMP has risen over time compared to inflation. Until 2024, these rebates were capped at 100% of the AMP, but the American Rescue Plan (2021) lifted those caps as of 2024. The price of Flovent has increased quite dramatically over the past 20 years (see the figure below), with the result that the manufacturer (GSK) would potentially be on the hook for a very large rebate to the government (Levy, Socal, and Ballreich, 2024 estimate an additional $367 million on top of the ~$1B they already paid).

Estimated flovent pricing over time. Source: Levy, Socal, and Ballreich, 2024
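A simplified Python sketch of the two Medicaid rebate components and the pre-2024 cap. This is an assumption-laden model, not the statutory formula: it uses the 23.1%-of-AMP minimum that applies to most brand-name drugs, folds the inflation adjustment into a single CPI ratio rather than quarterly index values, ignores special cases such as line extensions, and the drug and all its prices are hypothetical.

```python
def medicaid_unit_rebate(
    amp: float,
    best_price: float,
    baseline_amp: float,
    cpi_ratio: float,
    capped: bool,
    minimum_share: float = 0.231,
) -> float:
    """Simplified per-unit Medicaid rebate for a brand-name drug.

    amp          -- current average manufacturer price per unit
    best_price   -- lowest price offered to most other purchasers
    baseline_amp -- AMP when the product was first measured
    cpi_ratio    -- consumer price index now divided by the index at baseline
    capped       -- True for the pre-2024 rule capping the total at 100% of AMP
    """
    # Basic rebate: the larger of a flat share of AMP or the AMP-to-best-price gap.
    basic = max(minimum_share * amp, amp - best_price)
    # Inflation penalty: any AMP growth beyond what inflation alone would explain.
    inflation_penalty = max(0.0, amp - baseline_amp * cpi_ratio)
    total = basic + inflation_penalty
    return min(total, amp) if capped else total


# Hypothetical drug whose price rose much faster than inflation.
before_2024 = medicaid_unit_rebate(300.0, 250.0, 40.0, 1.5, capped=True)
after_2024 = medicaid_unit_rebate(300.0, 250.0, 40.0, 1.5, capped=False)
# Same prices, but a product with no pricing history (baseline equals today's AMP).
no_history = medicaid_unit_rebate(300.0, 250.0, 300.0, 1.0, capped=False)
print(before_2024, after_2024, no_history)
```

With the cap, the hypothetical rebate is limited to the full $300 AMP; without it, the manufacturer owes about $309 per unit, more than the drug's AMP. A product that starts with no pricing history has no inflation penalty at all, leaving only the basic rebate of about $69.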

In the event, GSK turned to what Levy, Socal, and Ballreich call a "strategic manufacture response", or what you might call a "loophole". They worked with a company called Prasco to launch what's called an "authorized generic" version of Flovent. Unlike a regular generic, which is made by a third party and must be bioequivalent, an authorized generic is the same as the brand name drug, but sold in non-brand-name packaging. According to Xeteor, it's not just the same drug, it's actually made in the same factory as Flovent was:

This is the most common question patients ask when switching to a Prasco generic. Because Prasco sells authorized generics, Prasco drugs are typically manufactured in the exact same facilities as the original brand-name drugs.

Prasco does not formulate these medications in a separate, lower-cost factory. They partner with massive pharmaceutical giants like GSK, AstraZeneca, and Eli Lilly. For example, if you buy the Prasco authorized generic for a GSK inhaler, it was manufactured on GSK's assembly line alongside the brand-name versions. It passes the exact same quality control standards before being placed in a Prasco box.

The authorized generic fluticasone launched in 2022 and then in 2024, GSK stopped making Flovent entirely, which meant that the only fluticasone inhaler you could get was the Prasco authorized generic. The list price of the authorized generic was lower than Flovent, but still quite high. This had a number of undesirable (from the consumer perspective) side effects:

- Whatever discounts the pharmacy benefit managers (PBMs) had negotiated with GSK for Flovent no longer applied, and in some cases the PBMs responded by taking the authorized generic off the formulary, so patients had to pay higher copays.

- Because the $35 coupons were tied to the brand name version, they suddenly no longer applied, and fluticasone users were exposed to the full price rather than the $35 limit.

- Because there was no pricing history to go by, Prasco didn't have to pay the increased Medicaid rebates that GSK owed for having raised Flovent's price faster than inflation.

Of course, Prasco doesn't get to call it Flovent, but that doesn't really matter because nobody can buy Flovent any more and now all the mechanisms designed to steer people towards generics are working for GSK/Prasco instead of against them by steering people towards the authorized generic. In May 2024, Senator Maggie Hassan sent a letter to GSK pointing out the negative consequences of this change, but as of this writing the situation remains the same.

Other Fluticasone Inhalers

Although GSK has discontinued Flovent, they still make another fluticasone-based inhaler called Arnuity Ellipta. Arnuity Ellipta differs from Flovent in two main ways:

- It's a different form of fluticasone, namely fluticasone furoate, which is longer-lasting and thus more suitable for once-a-day dosing.

- Ellipta is what's called a dry powder inhaler (DPI), which means that instead of having an aerosol propellant, you inhale a powder into your lungs.

There are some technical advantages to DPIs,[3] but from the pricing perspective there are two big disadvantages to Arnuity Ellipta:

- It's still under patent.[4]

- It only carries 30 doses, which means 30 days at once-a-day dosing. By contrast, a typical fluticasone inhaler carries 120 doses, so it can often last 60 days at the recommended twice-daily dosing, or even 120 days at once-daily dosing, which appears to be fine if your asthma is already well controlled.[5]

As with Flovent, GSK offers a $35/unit coupon for Arnuity Ellipta, but because each inhaler only lasts 30 days, it's still more expensive than Flovent would have been with the $35 coupon. Recently, Prasco launched an authorized generic version of Arnuity Ellipta, so it will be interesting to see whether GSK discontinues the name-brand version as they did with Flovent HFA.
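The monthly out-of-pocket difference under a $35-per-inhaler coupon can be sketched in Python. The sketch assumes 30-day months, the dose counts given above, and that the coupon price applies per inhaler for both products; it ignores anything else (deductibles, coupon limits) that might change the picture.

```python
COUPON_PRICE = 35.0  # assumed out-of-pocket price per inhaler under the coupon


def monthly_cost(doses_per_inhaler: int, doses_per_day: int, days_per_month: float = 30.0) -> float:
    """Out-of-pocket cost per month when every inhaler costs COUPON_PRICE."""
    days_per_inhaler = doses_per_inhaler / doses_per_day
    return COUPON_PRICE * days_per_month / days_per_inhaler


arnuity = monthly_cost(30, 1)         # 30 doses, once daily
flovent_twice = monthly_cost(120, 2)  # 120 doses, recommended twice-daily dosing
flovent_once = monthly_cost(120, 1)   # 120 doses, once daily (well-controlled asthma)
print(arnuity, flovent_twice, flovent_once)
```

That works out to $35 a month for Arnuity Ellipta versus $17.50 a month for a 120-dose fluticasone HFA inhaler at twice-daily dosing, or $8.75 at once-daily dosing.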

True Generics

The one bright spot here is that now that all the patents for Flovent have expired, we can see true generics. In March 2026, Glenmark Pharmaceuticals got approval for a generic fluticasone HFA inhaler, but unfortunately only at the lowest dose (44mcg). The generic process gives the first manufacturer to get approval a 180-day exclusivity period after which any manufacturer will be able to get approval for their generic, so presumably towards the end of this year we'll start to see some of the other big generic manufacturers get into the game and finally see true competition in this market, with a corresponding drop in price.
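For a rough sense of timing, the end of a 180-day exclusivity window can be computed with Python's `datetime` module. The start date is hypothetical: the post only says March 2026, and the 180-day clock generally starts when the first generic actually goes on the market rather than on the approval date.

```python
from datetime import date, timedelta

# Hypothetical start date: the post only gives "March 2026", and the clock
# generally starts at first commercial marketing, which may differ from approval.
exclusivity_start = date(2026, 3, 1)
exclusivity_end = exclusivity_start + timedelta(days=180)
print(exclusivity_end.isoformat())
```

Starting from March 1, 2026, the window would close in late August 2026, consistent with the expectation of more competitors later this year.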

How deep the rabbit hole goes

I've focused here on asthma medications, and inhaled corticosteroids in particular, but there is actually a whole pile of practices that drug manufacturers use to extend their period of exclusivity.

Chemical Variants

One common approach is to develop a new drug that is closely related to the existing drug. In some cases, this is a genuine advance with better properties: it's common for one drug in a class to be invented first and for others with improved properties to follow as manufacturers get a clearer picture of what works and what doesn't (think of all the different penicillin variants). In other cases, the value is much less clear.

Stereoisomers and the Chiral Switch

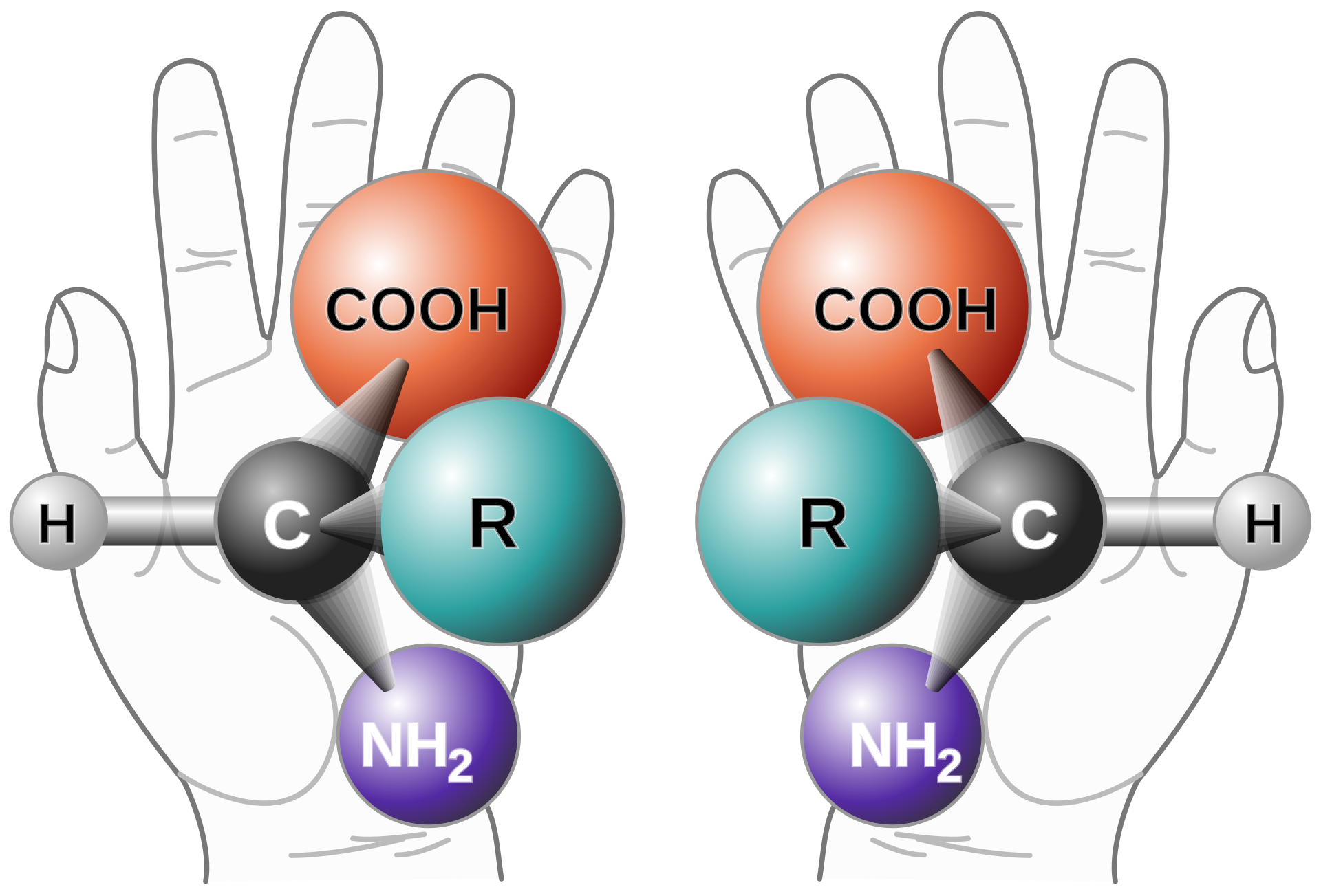

For example, many molecules are chiral, which is to say that they come in two variants that are mirror images of each other and cannot be superimposed, in the same way that your left and right hands are mirror images. The two mirror-image versions of the molecule are called "enantiomers" (one kind of "stereoisomer").

Not uncommonly, the two enantiomers of a molecule will have different effects on the body. For example, your body can digest one enantiomer of glucose (dextrose) but not the other (l-glucose). Much more unfortunately, thalidomide was marketed as a morning sickness drug and consisted of a mixture of two enantiomers, one of which helped treat nausea while the other caused severe birth defects.

Often, however, both enantiomers are active or one is active and the other just innocuous. In such cases, if it is more convenient chemically to synthesize both enantiomers in an equal mixture (a racemate), the manufacturer will just do that and market the mixture rather than the active enantiomer. This has the additional benefit of creating an opportunity for the manufacturer to produce a "new" drug that just consists of the active enantiomer and get new patent protection for that, in what's called the "chiral switch". Some examples of this approach include:

| Original | Pure enantiomer | Drug type |
|---|---|---|
| Prilosec (omeprazole) | Nexium (esomeprazole) | Proton pump inhibitor |
| Albuterol | Levalbuterol | β2-agonist |
| Citalopram | Escitalopram | SSRI |

The typical argument for the chiral switch is that one enantiomer might have some undesirable side effects that you can avoid by removing it. For instance, the idea behind levalbuterol was that the S enantiomer of albuterol had a greater stimulating effect on the heart, and so by removing it you could get the bronchodilating effect with fewer cardiac effects. The available evidence does not seem to support there being a large effect in practice, however.

Sometimes the chiral switch does make a big difference, though. For example, the Parkinson's drug dopa comes in two variants, l-dopa and d-dopa. d-dopa has a number of negative side effects, so pure l-dopa is preferred for treatment. Unfortunately, in the case of thalidomide you can't just give people the safe enantiomer, because it is converted into the other enantiomer in the body.

Prodrugs

It's not uncommon for a drug to itself be biologically inactive but to be converted into something that is active in the body. In these cases, the inactive version is called a "prodrug" for the active version. For example, the anti-allergy drug loratadine (Claritin) is converted into desloratadine in your liver, and it's desloratadine that has the desired effect. This provides another opportunity to get two drugs for the price of one, and Schering duly rolled out Clarinex (desloratadine) 13 years after they first rolled out Claritin.

You can also go the other way: the popular ADHD drug lisdexamfetamine (Vyvanse) is just a prodrug of dextroamphetamine (Dexedrine). The idea here is that the process of metabolizing lisdexamfetamine to dextroamphetamine provides extended release with less potential for abuse. There seems to be some evidence that this is actually true, though the effect also seems to be pretty modest.

Additional Indications

When the FDA approves drugs, they are approved to treat specific conditions ("indications"). This requires the manufacturer to produce studies demonstrating that the drug is "safe and effective". The manufacturer is limited to marketing the drug for those indications, but once a drug is approved, doctors can prescribe it for other conditions as well, in what is called "off-label" prescribing. However, manufacturers can perform new studies and use them to get approval for other indications.

A good example here is semaglutide (Ozempic), which was approved for management of type 2 diabetes but was prescribed off-label as a weight loss drug. Eventually, Novo Nordisk got the drug approved for weight loss, where it's sold under the name Wegovy. As part of that process, Novo Nordisk acquired a new patent for using semaglutide for weight management; this patent doesn't expire until 2038!

Partially overlapping patent lifetimes put the manufacturer in a somewhat tricky position, because the patent on the compound itself can expire, allowing generic sales, while the patent on an additional indication is still valid. Technically, this means the generic isn't supposed to be used for those indications, but of course doctors are likely to prescribe it that way anyway, and going after individual doctors for patent infringement doesn't really scale. At least in the US, the name brand manufacturer also can't sue the generic manufacturer for contributory infringement, even if the generic manufacturer knows the drug is being used off-label in this way. In theory, they could go after the generic manufacturer for "actively inducing" infringement, for instance if it actually marketed the drug for the patented use,[6] but, as determined in the recent US Supreme Court decision in Hikma Pharmaceuticals USA Inc. v. Amarin Pharma, Inc., it's not actively inducing infringement to just tell people that the generic is the same as the brand name drug and let them figure it out for themselves.

The bigger picture

The underlying dynamic here is that pharmaceuticals are incredibly expensive to develop and test but usually have a very low marginal cost of production (kind of like software). The way we have decided to manage this situation is by giving pharma companies a temporary monopoly on the sale of the resulting drug in the form of a patent, allowing them to extract monopoly rents above the cost of production during the life of the patent. However, software is primarily protected by copyright, which has a practically unlimited duration, whereas patents have a comparatively short duration, which gives pharma companies a strong incentive to figure out how to extend the patent lifetime.

The second problem is the complicated structure of the US drug pricing system, which does not always act in the interest of patients: it does not consistently exert downward pressure on drug prices, align the interests of patients with those of PBMs and health plans, or provide transparent pricing that supports good decision making by patients and doctors. As a consequence, patients often pay higher prices than they should (often much higher than in peer countries) and have to exert much more effort navigating the maze of different drug choices and prices. This burden falls heaviest on patients with less generous insurance plans, who are exposed to more of the cost of prescription drugs, and lightest on patients who have good insurance and can largely ignore drug prices (at least until they want something unusual). The result is an opaque and inefficient system that provides drug manufacturers with large profits and is also very difficult to change.

[1] In this respect, drug development is much like software, where the marginal cost of production is zero.

[2] Ironically, CFCs were invented by Thomas Midgley Jr., who was also to a great extent responsible for the development of leaded gasoline, leading J.R. McNeill to describe him as having a "more adverse impact on the atmosphere than any other single organism in Earth's history." (quote from Wikipedia).

[3] For example, HFAs are greenhouse gases.

[4] Once again, the chemical patent has expired, but some of the patents on the mechanisms remain.

[5] The 30-day dosing thing appears to be a genuine limitation, not just shrinkflation. Basically, the DPI depends on the powder being, well, dry, and once you've opened the packaging, the inhaler itself starts to pick up moisture and there's an increased risk of clumping, even though the doses themselves are individually packaged. As a result, you can't just add more doses to the inhaler.

[6] The generic manufacturer is required to put out the drug with what's called a "skinny label" that only lists the non-patented indications.